Introduction

2022 was a turbulent year, especially with the onset of the war in Ukraine. For tech businesses, the year brought uncertainty, layoffs, and financial hardships.

Yet, amidst all the chaos, it was evident that many organizations displayed resilience, adaptability, and innovation in order to survive the unprecedented times.

Companies shifted operations to the cloud, cut staff by laying off thousands of IT specialists, embraced Platform Engineering and DevSecOps cultures, and started looking deeper into AI.

I’ve gathered a few essential innovations that must be on your radar this year.

Development experience and deployment workflow tools

The Infrastructure as Code (IaC) approach lets every new deployment come with its own infrastructure, such as data and storage, and ensures that security and governance tools are already in place. But how can developers get more out of Infrastructure as Code and start using it without a high entry threshold?

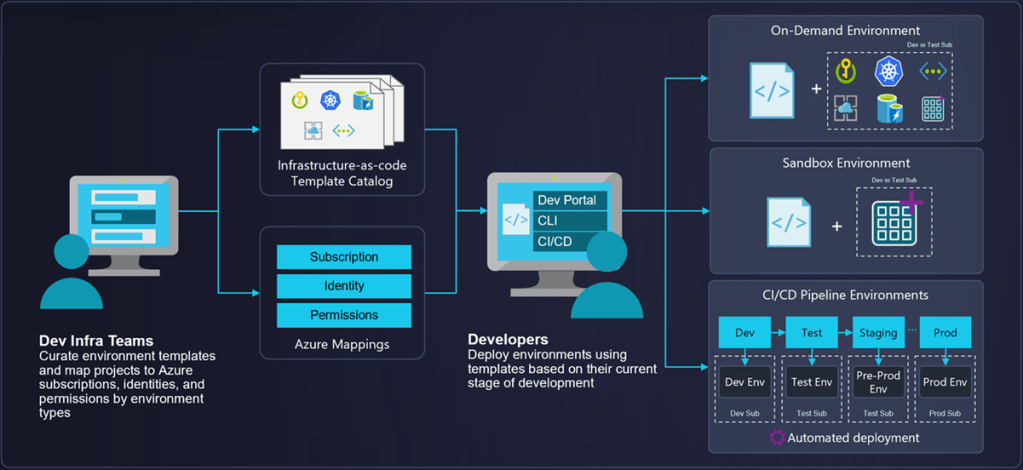

Deployment workflow tools like Azure Deployment Environments (ADE) or AWS Proton could be the answer.

Microsoft announced the public preview of Azure Deployment Environments (ADE). The service helps developers save time by spinning up on-demand app deployment environments from infrastructure-as-code templates. Templates are built as ARM (and eventually Terraform and Bicep) files and kept in source control repositories with versioning, access control, and pull request processes, making it easy for developers to collaborate across teams.

Azure Deployment Environments is a managed service that works with your existing development platform and integrates natively with your CI/CD, which simplifies and accelerates on-demand app deployments from Infrastructure as Code templates.
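To make the idea of an ARM-based template more concrete, here is a minimal sketch that builds one as a Python dictionary and renders it as JSON. The parameter name, the API version, and the choice of a storage account are assumptions made for illustration; how a catalog of such templates is laid out for Azure Deployment Environments is not shown here.

```python
import json


def build_storage_template(account_name_param: str = "storageAccountName") -> dict:
    """Return a minimal ARM template that declares a single storage account.

    The parameter name and apiVersion are illustrative assumptions.
    """
    return {
        "$schema": "https://schema.management.azure.com/schemas/2019-04-01/deploymentTemplate.json#",
        "contentVersion": "1.0.0.0",
        # The caller supplies the account name at deployment time.
        "parameters": {
            account_name_param: {"type": "string"},
        },
        "resources": [
            {
                "type": "Microsoft.Storage/storageAccounts",
                "apiVersion": "2022-09-01",
                "name": f"[parameters('{account_name_param}')]",
                # Deploy into the same region as the target resource group.
                "location": "[resourceGroup().location]",
                "sku": {"name": "Standard_LRS"},
                "kind": "StorageV2",
            }
        ],
    }


def render(template: dict) -> str:
    """Serialize the template so it can be committed to source control."""
    return json.dumps(template, indent=2)
```

Calling `render(build_storage_template())` produces a JSON document that can be reviewed in a pull request like any other file, which is the collaboration model described above.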

Key Azure Deployment Environment benefits are:

- Cost Savings: Azure Deployment Environments provide cost savings over traditional on-premises solutions. By using cloud-hosted solutions, customers can save money on physical hardware and maintenance costs.

- Scalability: ADE ensures the scalability needed to quickly deploy and scale applications in the Azure cloud, with the ability to customize resources to fit specific needs.

- Automation: Multiple automation features are available within Azure Deployment Environments, such as automated deployment, patching, and configuration management.

- Improved Security: ADE provides high levels of security and compliance, including built-in protection against data loss and unauthorized access.

AWS Proton is a fully managed service from Amazon Web Services (AWS) that helps customers centrally manage and automate application delivery and life-cycle management. Proton simplifies the process of deploying and operating applications, allowing customers to quickly launch, update, and roll back application changes. This helps customers ensure application reliability, improve operational efficiency, and reduce costs. Proton can be used to orchestrate changes across a range of AWS resources, such as EC2, S3, and ECS.

Among the key benefits, two are essential:

- Time Savings: AWS Proton helps to reduce the time and effort associated with setting up and operating your applications in the cloud. With Proton, you can access pre-configured templates and automation to quickly launch a DevOps pipeline and deploy applications with just a few clicks.

- Cost Reduction: Proton helps to reduce operational costs associated with running applications in the cloud by eliminating the need to manually configure infrastructure components. This results in lower costs, since AWS Proton automates the process of managing infrastructure components.

Need to spin up an environment to test some code? Want to automate it as part of your pipeline? Simply pick the template you need from the library and let deployment workflow tools do the magic.

AI-Assisted Development Tools

2022 was the year of AI: GPT-3 crafted (sometimes pretty engaging) texts, DALL·E 2 created realistic images and art from natural-language descriptions, and Google’s LaMDA “has come to life”.

But let’s see how AI has boosted software development with AI-assisted development tools.

AI-assisted development is a concept that helps users write code faster by drawing on expertise learned from millions of code patterns and recommending suitable tools and patterns to the developer for a given situation.

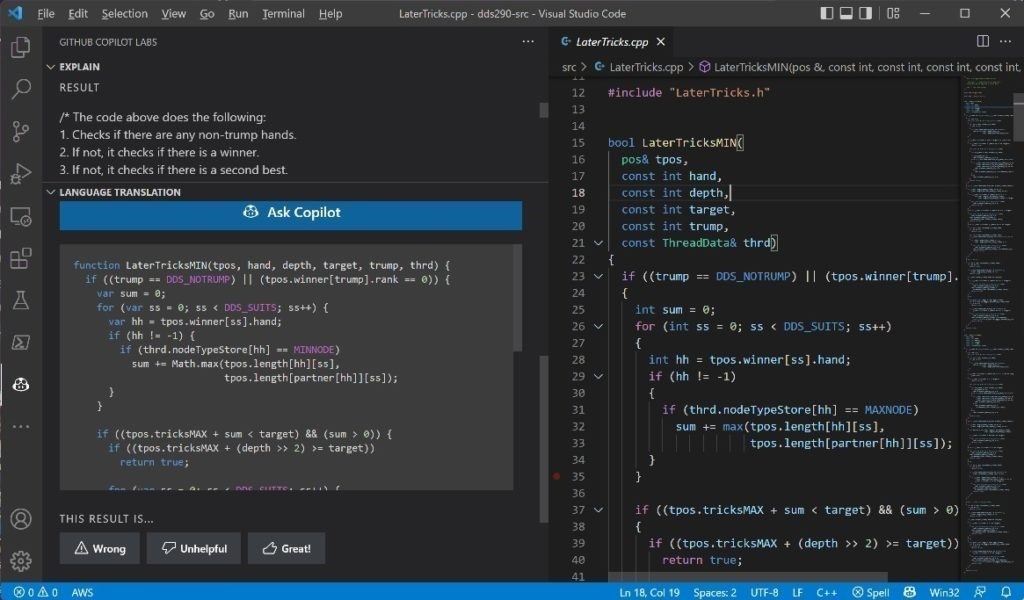

In 2022, both Microsoft and Amazon released products in this space: GitHub Copilot and Amazon CodeWhisperer. GitHub Copilot is a service from GitHub and OpenAI that uses OpenAI Codex to suggest code snippets and entire functions in real time, right in your editor. In VS Code, for example, it runs as an extension that generates code based on the contents of the current file and your cursor location.

It is a good way for experienced developers to spend less time googling and stackoverflowing things and to be more productive. I use it to auto-fill routine and boilerplate code; I do not use it to learn new syntax or as an ultimate cheat sheet.
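As an illustration of what "routine and boilerplate" can mean in practice, the sketch below shows the kind of code an assistant can typically fill in after you type the class name and fields. It is hand-written for this article, not actual Copilot output, and the `User` class and its fields are hypothetical.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class User:
    """A hypothetical record type; the fields are made up for illustration."""

    id: int
    name: str
    email: str

    def to_json(self) -> str:
        # Serialize all dataclass fields to a JSON string.
        return json.dumps(asdict(self))

    @classmethod
    def from_json(cls, raw: str) -> "User":
        # Rebuild the object from the JSON produced by to_json().
        return cls(**json.loads(raw))
```

Code like this is easy to review at a glance, which is why it is a reasonable candidate for auto-completion.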

Though Copilot supports a plethora of languages (Java, C, C++, C#, Python, JavaScript, TypeScript, Ruby, and Go), I would not recommend it to people who are new to programming. Offloading your code writing to Copilot won’t help you in the long term.

Copilot is generally available for $10 USD/month or $100 USD/year. It is free for verified students and maintainers of popular open-source projects. Microsoft says that writing a web server in JavaScript takes 55% less time with Copilot.

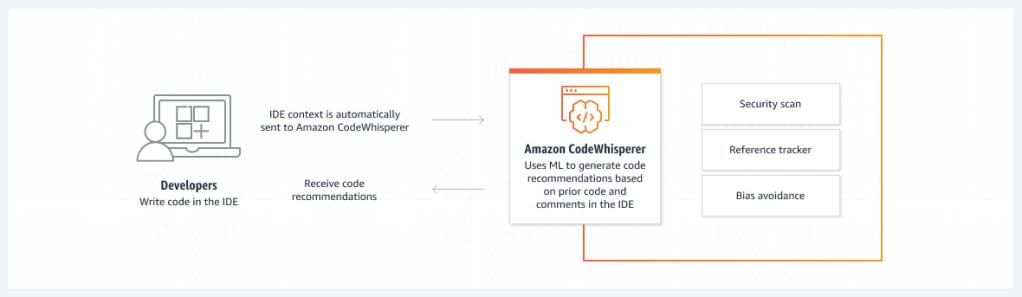

Amazon CodeWhisperer is a machine-learning-powered coding companion from Amazon Web Services (AWS). It generates real-time code recommendations in the IDE based on your comments and existing code, and it can scan code to flag potential security issues early.

With CodeWhisperer, users can catch problems earlier and spend less time on manual, repetitive tasks.

You can also resolve errors faster, as it uses artificial intelligence to detect code issues and suggest cleaner code. This speeds up the development process and reduces the amount of time a developer spends troubleshooting.

Recommended further reading: https://www.outsystems.com/glossary/what-is-ai-assisted-development/

Multi-Cloud/Cross-Cloud

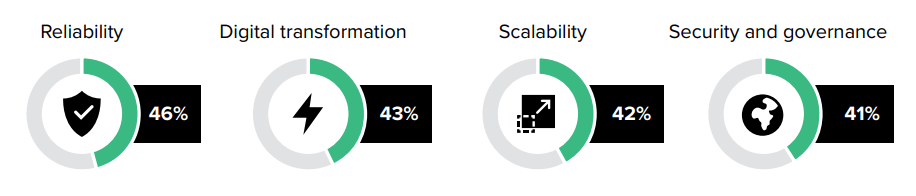

Multi-cloud is helping IT decision-makers accomplish their business goals, but the complexity of managing multiple technologies, applications, and APIs, as well as developing processes around them, breeds operational challenges. Without a centralized approach to managing complex multi-cloud operations, skills shortages, siloed teams, and other factors can exacerbate security risks.

According to the IBM MultiCloud report in 2020, the cost of outages ranged from $100,000 to $1 million, with 16% of outages costing more than $1 million. That said, leaders have been consistent about the primary driver being applications and services. It’s also likely that leaders are architecting their applications for resiliency, mitigating risk when an outage does occur and allowing them to focus on the applications and services that they can leverage to differentiate their value proposition.

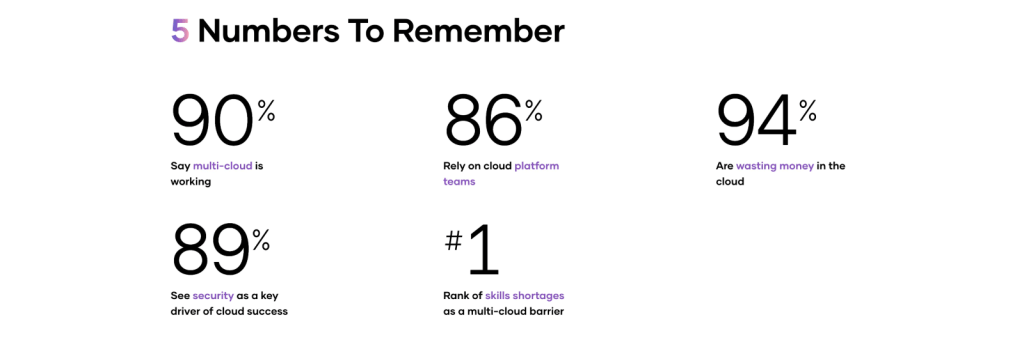

HashiCorp also says that multi-cloud adoption keeps growing, and that the rise of cloud platform teams is already helping enterprises derive significant business benefits from the strategy. Its 2022 State of Cloud Strategy Survey highlights the ongoing importance of cloud security and how the shortage of required skills is affecting the way companies operationalize multi-cloud. Breaking new ground, the survey also delves into the increasingly critical role played by cloud platform teams and why so many organizations are still wasting money in the cloud.

Tools like Terraform are drivers of multi-cloud adoption. For example, the HashiCorp tutorial listed in the further reading below provisions Kubernetes clusters in both Azure and AWS environments using their respective providers.
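As a rough sketch of what "respective providers" means, the snippet below generates a Terraform configuration in Terraform's JSON syntax that declares both the AWS and the Azure provider side by side. The region default and the function names are assumptions for illustration, and the cluster resources themselves (for example `aws_eks_cluster` and `azurerm_kubernetes_cluster`) are deliberately left out.

```python
import json


def multicloud_config(aws_region: str = "us-east-1") -> dict:
    """Build a Terraform JSON configuration that declares two cloud providers.

    Only the provider wiring is shown; no resources are created.
    """
    return {
        "terraform": {
            "required_providers": {
                "aws": {"source": "hashicorp/aws"},
                "azurerm": {"source": "hashicorp/azurerm"},
            }
        },
        "provider": {
            "aws": {"region": aws_region},
            # The azurerm provider requires a (possibly empty) features block.
            "azurerm": {"features": {}},
        },
    }


def write_config(path: str, aws_region: str = "us-east-1") -> None:
    """Write the configuration to a file such as main.tf.json."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(multicloud_config(aws_region), handle, indent=2)
```

Both providers live in one configuration, so a single plan and apply cycle can manage resources in both clouds.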

The multi-cloud concept was often discussed in 2022 together with the Platform Engineering or Platform Team concept.

Here’s what I love most about it.

Recommended further readings:

https://developer.hashicorp.com/terraform/tutorials/networking/multicloud-kubernetes

https://www.hashicorp.com/blog/hashicorp-state-of-cloud-strategy-survey-2022-multi-cloud-is-working

https://www.ibm.com/downloads/cas/L9K1MK1Y

https://hashi.co/3zuHYg5

Platform as a Service

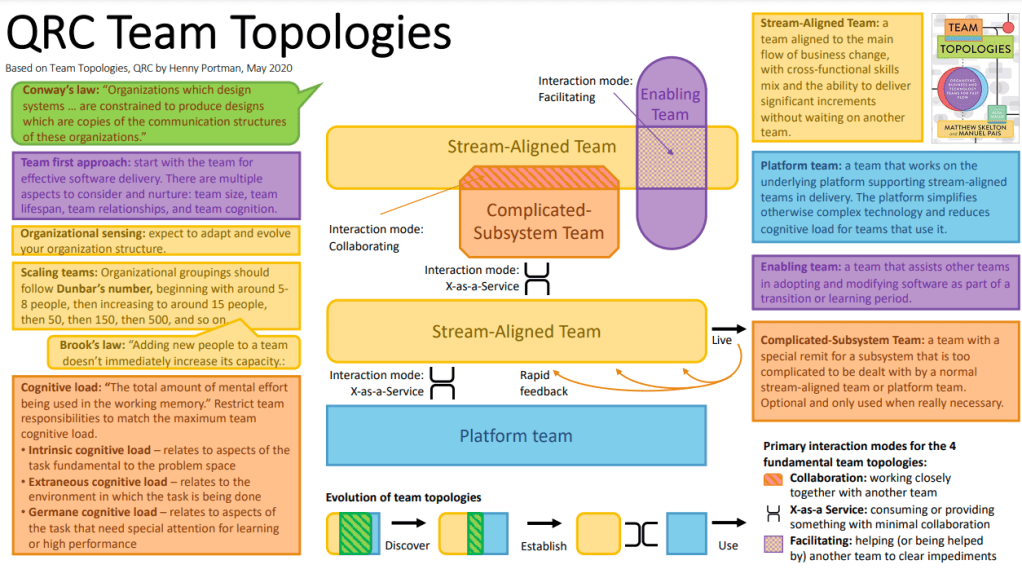

The Platform Engineering or Platform Team concept was described long before 2022: Spotify has long used “Golden Paths”, Netflix has “Paved Roads”, and Matthew Skelton and Manuel Pais described this approach and its cultural aspects in the book “Team Topologies”.

The Puppet State of DevOps Report 2021 describes it this way: a digital platform is a foundation of self-service APIs, tools, services, knowledge, and support, arranged as a compelling internal product. Autonomous delivery teams can make use of the platform to deliver product features at a higher pace, with reduced coordination.

The platform model enables self-service for developers and curates the developer experience. A highly effective platform provides a guided experience for the customers of the platform and that platform is treated as a product. It enables stream-aligned team members to focus on the things most important for their customers and get common building blocks and tools from the platform. Its purpose is to ensure delivery is smoother and faster.

On the other side of the coin, there is a Platform-as-a-product (PaaP), which is a type of cloud computing technology that provides a web-based platform on which businesses can develop, deploy, and manage applications, services, and data. A PaaP typically includes a set of software development kits (SDKs), APIs, and services that allow for easy development and management of applications.

Through the use of PaaP, businesses can quickly create, deploy, and scale applications without having to build and maintain the underlying infrastructure or commit to extensive hardware and software investments. On top of that, PaaP allows using pre-built components to quickly configure and deploy customized solutions.

Deploying applications using this model reduces the need for specialized IT staff, which can be both time-consuming and expensive. In addition to cost savings, Platform as a Product offers convenience, as users can access their apps and data from any device, whether it’s a laptop, tablet, or phone.

Self-service platforms are an enhanced option within the PaaS spectrum.

These are digital interfaces that allow users to access services and products that they need without relying on an intermediary. These platforms allow users to purchase products, book services, access information, and complete other activities without the need for a human agent or third-party service provider. Examples of self-service platforms include mobile banking applications, online shopping sites, airline booking portals, and digital entertainment apps.

Among the key benefits of Self-service platforms, there are:

- Reduced Cost – they can reduce the expenditures of customer support. Companies won’t need to hire customer service representatives to handle client inquiries.

- Improved Efficiency – Self-service platforms allow users to quickly and easily find answers to their questions. This saves time for both customers and customer service teams.

- Increased Customer Satisfaction – such platforms can provide customers with more control over their support experience. Customers can quickly and easily get the help they need without having to wait for customer service representatives.

Serverless Databases

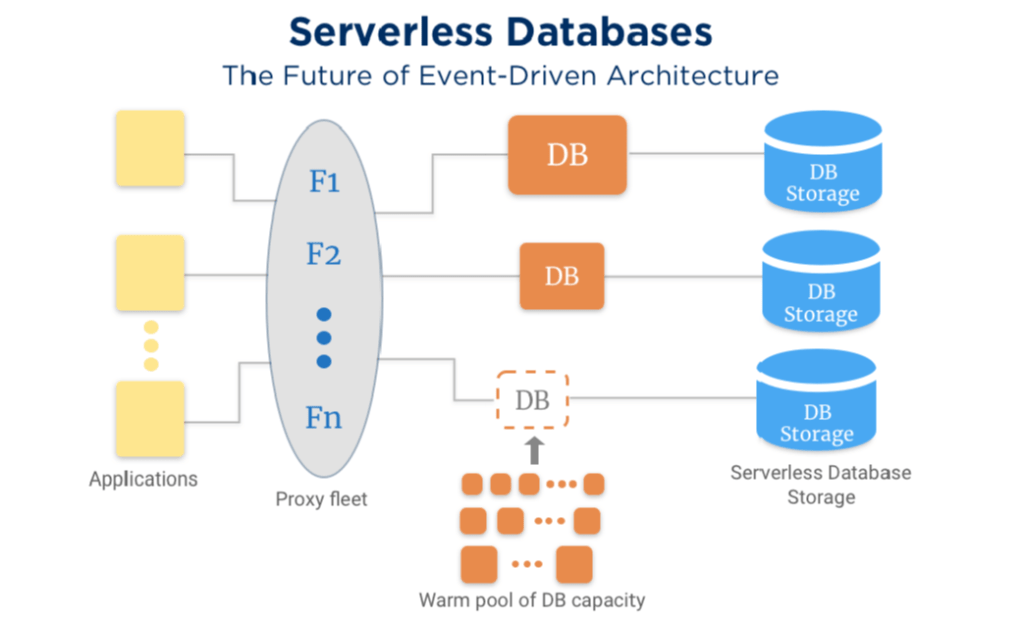

Public cloud providers like AWS, Azure, and GCP are adding more serverless and distributed SQL databases for cloud-native applications to their offerings.

At first, serverless capabilities were associated only with application development; cloud services like AWS Lambda and Azure Functions were introduced a long time ago. Then cloud-native applications began to require the same serverless flexibility for storing and processing data in serverless, event-driven, microservice architectures.

Amazon Redshift added a serverless option that reduces the operational burden: you can get insights by querying data in the data warehouse without setting up and managing infrastructure.

Azure offers SQL Database serverless that optimizes price-performance and simplifies performance management for databases with intermittent, unpredictable usage. Serverless automatically scales compute for single databases based on workload demand and bills for compute used per second, so you only pay for what you use.
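The per-second billing model is easiest to see with a little arithmetic. The sketch below compares a serverless bill with an always-on provisioned bill for the same database. The prices passed in are placeholders you supply yourself, not published Azure rates, and the model ignores storage costs and any minimum billed capacity.

```python
def serverless_compute_cost(vcore_seconds: float, price_per_vcore_second: float) -> float:
    """Cost when you pay only for the vCore-seconds actually consumed."""
    return vcore_seconds * price_per_vcore_second


def provisioned_compute_cost(vcores: int, hours: float, price_per_vcore_hour: float) -> float:
    """Cost when a fixed number of vCores is billed for every hour, busy or idle."""
    return vcores * hours * price_per_vcore_hour


def monthly_vcore_seconds(vcores: float, active_hours_per_day: float, days: int = 30) -> float:
    """vCore-seconds consumed by a workload that is active only part of each day."""
    return vcores * active_hours_per_day * 3600 * days
```

For a database that is busy two hours a day, `monthly_vcore_seconds(2, 2)` covers only a small fraction of the 720 hours in a 30-day month that a provisioned instance would be billed for, which is why intermittent workloads tend to benefit from this model.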

A typical serverless database use case could be creating a user database for an application that needs to store and retrieve user data. This can be done using a serverless NoSQL database, such as Amazon DynamoDB. With a serverless database, there is no need to deal with maintaining servers, such as setting up hardware, managing database backups, and upgrading software. All of these database management tasks are handled by the serverless platform, making it easy to add, delete, and update data.
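A minimal sketch of that user-data use case with the `boto3` SDK might look like the following. The table name `users`, the key attribute `user_id`, and the `email` attribute are assumptions for illustration; the table is expected to exist already, and AWS credentials must be configured in the environment.

```python
def get_users_table(table_name: str = "users"):
    """Return a DynamoDB table handle (table name is a hypothetical example)."""
    import boto3  # third-party AWS SDK, imported lazily

    return boto3.resource("dynamodb").Table(table_name)


def save_user(table, user_id: str, email: str) -> None:
    """Create or overwrite the item stored under the given user_id."""
    table.put_item(Item={"user_id": user_id, "email": email})


def load_user(table, user_id: str):
    """Return the stored item as a dict, or None if no such user exists."""
    response = table.get_item(Key={"user_id": user_id})
    # DynamoDB omits the "Item" key entirely when nothing matches.
    return response.get("Item")
```

A typical call sequence is `table = get_users_table()`, then `save_user(table, "u1", "ann@example.com")` and `load_user(table, "u1")`. There is no connection pool, server size, or backup schedule anywhere in the code, which is the point of the serverless model.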

Serverless databases are typically used for:

- Online Shopping – Serverless databases are ideal for eCommerce applications that don’t require a huge amount of data storage. Using a serverless system allows businesses to use a more cost-effective model and easily scale up when needed.

- Mobile Applications – Serverless databases are great for mobile app development since they are lightweight and need minimal configuration steps. They also allow for easy development and deployment on all platforms.

- IoT Data Storage – IoT devices generate a huge amount of data and require an efficient backend to manage tons of information without sacrificing the platform experience.

eBPF

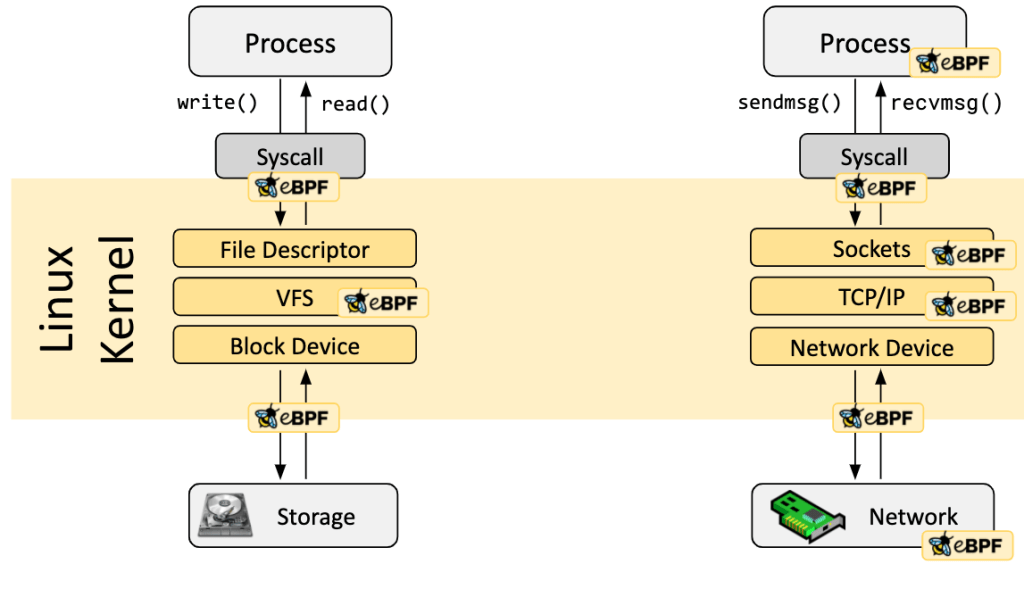

eBPF is a revolutionary technology with origins in the Linux kernel that can run sandboxed programs in an operating system kernel. It is used to safely and efficiently extend the capabilities of the kernel without requiring changes to kernel source code or loading kernel modules.

Historically, the operating system has always been an ideal place to implement observability, security, and networking functionality due to the kernel’s privileged ability to oversee and control the entire system. At the same time, an operating system kernel is hard to evolve due to its central role and high requirement for stability and security. The rate of innovation at the operating system level has thus traditionally been lower compared to functionality implemented outside of the operating system.

eBPF has been fully available since Linux 4.4 and lets programs run without adding modules or modifying the kernel source code. You can think of it as a lightweight, sandboxed virtual machine (VM) within the Linux kernel. It allows programmers to run Berkeley Packet Filter (BPF) bytecode that makes use of certain kernel resources.
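To show what running a sandboxed program in the kernel looks like, here is a small sketch using the BCC toolkit's Python bindings. It assumes BCC is installed and that the script runs as root on a Linux host; the function name `hello` and the choice of the `execve` system call are arbitrary.

```python
# A tiny eBPF program written in restricted C. It runs inside the kernel
# each time the probed function is entered and writes to the trace pipe.
BPF_PROGRAM = r"""
int hello(void *ctx) {
    bpf_trace_printk("Hello from eBPF!\n");
    return 0;
}
"""


def main() -> None:
    """Compile the program, attach it to execve, and stream its output."""
    from bcc import BPF  # requires the BCC toolkit; imported lazily

    b = BPF(text=BPF_PROGRAM)
    # Resolve the kernel symbol for the execve syscall on this kernel.
    syscall = b.get_syscall_fnname("execve")
    b.attach_kprobe(event=syscall, fn_name="hello")
    # Blocks and prints one line whenever any process calls execve.
    b.trace_print()
```

Calling `main()` as root prints a line for every new process started on the machine. No kernel module was built or loaded, and the kernel source was not touched.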

Utilizing eBPF removes the necessity to modify the kernel source code and improves the capacity of software to make use of existing layers. Consequently, this technology can fundamentally change how services such as observability, security, and networking are delivered.

Some projects have started supporting eBPF and gained many improvements as a result:

Calico offers support for Linux eBPF. Calico’s eBPF data plane scales to higher throughput, uses less CPU per GBit, and has native support for Kubernetes services (without needing kube-proxy).

Katran is a load balancer with a completely reengineered forwarding plane that takes advantage of two recent innovations in kernel engineering: eXpress Data Path (XDP) and the eBPF virtual machine. Katran is deployed today on backend servers in Facebook’s points of presence (PoPs), and it has helped Facebook improve the performance and scalability of network load balancing and reduce inefficiencies such as busy loops when there are no incoming packets.

I am sure that in the future we will see many more cool projects that use eBPF.

Recommended further readings:

https://engineering.fb.com/2018/05/22/open-source/open-sourcing-katran-a-scalable-network-load-balancer/

https://www.tigera.io/learn/guides/ebpf/

https://ebpf.io/

Log4J vulnerability

2022 started with the Log4j vulnerability (Log4Shell, CVE-2021-44228), disclosed in December 2021, which came as a big shock to both businesses and open-source companies.

The Log4j vulnerability was a flaw in Apache Log4j, a widely used logging library for Java applications, and it became a notorious way to inject malicious code into web applications.

This vulnerability occurred because the library did not treat user input as plain data when logging it: Log4j evaluated lookup expressions such as ${jndi:ldap://...} embedded in log messages. An attacker who managed to get such a string logged, for example in an HTTP header, could make the application load and execute code from an attacker-controlled server, which could result in serious security issues. In addition, attackers could potentially gain access to sensitive information available to the application.
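The best-known attack strings contained a JNDI lookup that began with `${jndi:`. As a simple illustration, the sketch below scans log lines for that marker. It is a naive detector written for this article: obfuscated variants that nest other lookups inside the expression will slip past it, so it is no substitute for upgrading Log4j.

```python
import re

# Matches the start of a JNDI lookup expression, case-insensitively.
JNDI_PATTERN = re.compile(r"\$\{\s*jndi\s*:", re.IGNORECASE)


def find_suspicious_lines(lines):
    """Return (line_number, line) pairs that contain a JNDI lookup marker."""
    return [
        (number, line)
        for number, line in enumerate(lines, start=1)
        if JNDI_PATTERN.search(line)
    ]
```

Running it over an access log gives a quick first look at whether anyone probed the application with this kind of payload.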

Given that it affected anyone who used Log4j, an open-source logging tool, the vulnerability hit large businesses such as Microsoft, Apple, and Google, as well as smaller companies. It is impossible to determine exactly how many companies were affected, as there is no public data available, but the extent of the impact is undoubtedly enormous.

Summary

The IT industry is expected to evolve rapidly over the next several years. This will be the result of various advances in technology, including the adoption of more advanced automation tools and software platforms, the widespread use of cloud computing, and the emergence of areas like artificial intelligence (AI) and machine learning (ML).

I kindly invite you to follow me on LinkedIn and my company at https://www.uitware.com to keep up with the changing requirements of the IT industry and DevOps culture in 2023.
